I am using LXC 1.0.11, installed from @epel, on CentOS 7.8.

LXC NAT networking works fully. My goal is bridged networking, with the LXC container on the same subnet as the host OS.

However, “ping” from the host OS to the started container, and vice versa, does not work.

This is my setup:

“lxcbr0” on the host OS is bridged to enp0s8, which has IP 192.168.75.109.

The container gets IP 192.168.75.129.

The container config has:

    lxc.utsname = bnode091
    lxc.network.type = macvlan
    lxc.network.flags = up
    lxc.network.link = lxcbr0
    lxc.network.name = eth0
    lxc.network.hwaddr = fe:42:d3:10:b5:66
    lxc.network.ipv4.gateway = 192.168.75.109
    lxc.network.ipv4 = 192.168.75.129/24
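To rule out a plain addressing mistake, here is a minimal sanity check confirming that the container address and the gateway from the config above sit in the same /24. It is only a sketch using Python's standard `ipaddress` module and is not part of the LXC setup; the variable names are my own.

```python
import ipaddress

# Values copied from the container config above.
container = ipaddress.ip_interface("192.168.75.129/24")
gateway = ipaddress.ip_address("192.168.75.109")  # also the host IP on enp0s8

# The gateway should fall inside the container's /24 network.
same_subnet = gateway in container.network
print(container.network, same_subnet)
```

The addressing looks consistent, so the subnet itself does not seem to be the problem.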

In the container I have:

    [root@bnode091 ~]# ifconfig -a
    eth0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500
            inet 192.168.75.129 netmask 255.255.255.0 broadcast 192.168.75.255

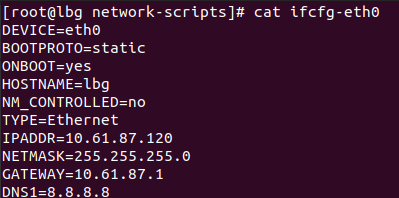

    [root@bnode091 ~]# cat /etc/sysconfig/network-scripts/ifcfg-eth0
    DEVICE=eth0
    BOOTPROTO=192.168.75.129
    ONBOOT=yes
    NM_CONTROLLED=no
    TYPE=Ethernet

    [root@bnode091 ~]# netstat -nr
    Kernel IP routing table
    Destination     Gateway         Genmask         Flags   MSS Window  irtt Iface
    0.0.0.0         192.168.75.109  0.0.0.0         UG        0 0          0 eth0
    169.254.0.0     0.0.0.0         255.255.0.0     U         0 0          0 eth0
    192.168.75.0    0.0.0.0         255.255.255.0   U         0 0          0 eth0

What am I missing to get this working?