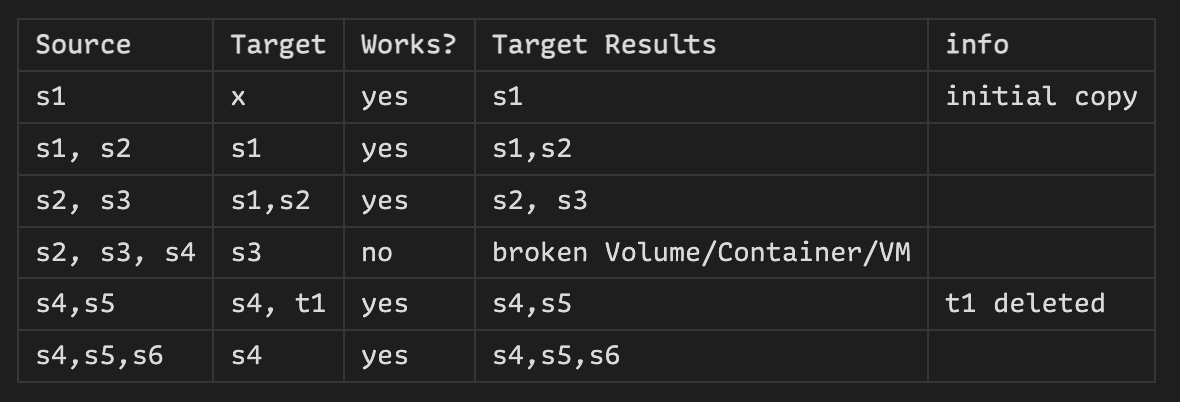

I would like to run an IncusOS backup server on which I can back up all the VMs/containers and volumes on my server. Now that incremental updates of custom volumes are working, I’ve been looking into backing up containers and VMs. In doing so, I noticed something that I couldn’t find in the documentation. I’m not sure if this is a problem that only occurs for me.

The behavior I observed occurs with containers as well as VMs.

Case 1:

I have a container without snapshots. I copy it with

incus copy server:c1 backup:c1

This works so far. Now I want to make another incremental backup later.

incus copy server:c1 backup:c1 --refresh

I get the following error message:

Error: Error transferring instance data: Failed migration on target: Failed creating instance on target: Failed receiving volume "c1": Failed to run: zfs receive -x mountpoint -F -u storagePool/storage/containers/c1: exit status 1 (cannot receive new filesystem stream: zfs receive -F cannot be used to destroy an encrypted filesystem or overwrite an unencrypted one with an encrypted one)

Case 2:

Same starting point, no initial snapshot. First copy works fine. Then I create a snapshot (s2) and try again:

incus copy server:c1 backup:c1 --refresh

This fails again with mostly the same error message:

Error: Error transferring instance data: Failed migration on target: Failed creating instance on target: Failed receiving snapshot volume "c1/s2": Failed to run: zfs receive -x mountpoint -F -u storagePool/storage/containers/c1@snapshot-s2: exit status 1 (cannot receive new filesystem stream: zfs receive -F cannot be used to destroy an encrypted filesystem or overwrite an unencrypted one with an encrypted one)

Case 3:

I create a snapshot (s1) and after that I perform the initial copy to the backup server, without explicitly mentioning the snapshot.

incus copy server:c1 backup:c1

After that I make a second snapshot (s2) and perform a refresh:

incus copy server:c1 backup:c1 --refresh

This works fine and it syncs the second snapshot (s2) incrementally to the backup server.

Case 4:

With an initial snapshot (s1), it works. If I create the first snapshot before I copy the container, I can perform a --refresh without creating a second snapshot.
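The sequence that worked in cases 3 and 4 can be sketched as a small script. This is only a sketch under assumptions: the remotes `server` and `backup` and the instance `c1` are taken from the commands above, while `backup_plan` and the `RUN` variable are hypothetical helpers introduced for illustration and are not part of Incus. By default it only prints the commands (dry run).

```shell
#!/bin/sh
# Sketch of the snapshot-first workflow from cases 3 and 4.
# Assumptions: remotes "server" and "backup" and instance "c1" as used above;
# backup_plan and RUN are hypothetical helpers, not part of Incus.

# Print (or run) the three steps: initial snapshot, first copy, refresh.
backup_plan() {
    src="$1"   # source instance, e.g. server:c1
    dst="$2"   # target instance, e.g. backup:c1
    snap="$3"  # name of the initial snapshot, e.g. s1
    run="${RUN-echo}"  # unset RUN: dry run that only prints the commands

    $run incus snapshot create "$src" "$snap"
    $run incus copy "$src" "$dst"
    $run incus copy "$src" "$dst" --refresh
}

backup_plan server:c1 backup:c1 s1
```

Running the script with `RUN=` (set but empty) executes the commands instead of printing them. Whether the initial snapshot is strictly required is exactly the open question here; the script only encodes the order that did not fail for me.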

All in all, it works as long as a snapshot exists before the first copy. However, I would have liked a little more information on the whole topic. I am also happy to contribute documentation on this.

What I am not sure about is whether this behavior is intentional.

I would have expected that when copying for the first time without an explicit snapshot, one would be created automatically, as happens in case 4, except that there it is the second snapshot that is created automatically.