I have LXD 3.0.3 on Ubuntu 18.04.6 LTS. Its `default` storage pool is 100 GB, and I want to increase it to 200 GB.

```
gpsemc@lxd15:~$ lxc storage show default
config:
  size: 100GB
  source: /var/lib/lxd/disks/default.img
  zfs.pool_name: default
description: ""
name: default
driver: zfs
used_by:
- /1.0/containers/***
- /1.0/containers/xyz
- /1.0/containers/Ubt20
- /1.0/containers/Ubuntu1804
- /1.0/containers/xyz1
- /1.0/containers/VMWXUBUNTU20
- /1.0/containers/buildEnvForXX
- /1.0/containers/buildEnvForXXX2004
- /1.0/containers/XZZ
- /1.0/containers/zZZi
- /1.0/images/4b350c31e7047b82533133c924f13bed8342f91b502ed9012395e4afbfebe9a7
- /1.0/images/ade430c33554a285f2427abbecb7f216e54dc9bc640641f9b4bf1fe267447c28
- /1.0/images/d58d3dcfc3d14ead8bf4b9ec85c3799b3fc5cb8e0b427e9d6813e226f9cee202
- /1.0/images/e3e1bd82cdc7fa1256cf2409dd8543630eefa1fca631ff0c78c0970babddc69f
- /1.0/images/e53fd879c785d18e7d4a8115dc57c45aa4104f339cac929e82670b9a721cc300
- /1.0/profiles/default
status: Created
locations:
- none
```

I tried to resize it, but it doesn't seem to have taken effect.

I ran the following command:

```
sudo truncate -s +100G /var/lib/lxd/disks/default.img
```
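A minimal sketch of what `truncate -s +SIZE` does, run against a throwaway temp file rather than the real pool image (the scratch file is hypothetical). It extends the file's apparent size, which is what `ls -l` reports, without allocating data blocks, so the file simply grows sparsely. Note that GNU `truncate` treats `M`/`G` as powers of 1024 and `MB`/`GB` as powers of 1000. Growing the file this way says nothing by itself about the size of the ZFS pool stored inside it.

```shell
#!/usr/bin/env bash
# Demonstrates on a scratch file (not the real pool image) that truncate
# only changes the apparent size and does not write any data blocks.
set -euo pipefail

img=$(mktemp)                  # hypothetical scratch file in /tmp
truncate -s 100MB "$img"       # MB = 1000*1000 bytes, like a "100GB" pool at small scale
truncate -s +100M "$img"       # M  = 1024*1024 bytes, added on top (like +100G)

size=$(stat -c %s "$img")      # apparent size in bytes (what ls -l shows)
blocks=$(stat -c %b "$img")    # 512-byte blocks actually allocated (usually 0 here)
echo "apparent size: $size bytes, allocated blocks: $blocks"

rm -f "$img"                   # clean up the scratch file
```

The apparent size comes out as 100,000,000 + 104,857,600 = 204,857,600 bytes, mirroring the mixed decimal/binary units in the real command.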

```
gpsemc@lxd15:~$ lxc storage list
+------------+-------------+--------+-----------------------------------+---------+
|    NAME    | DESCRIPTION | DRIVER |              SOURCE               | USED BY |
+------------+-------------+--------+-----------------------------------+---------+
| default    |             | zfs    | /var/lib/lxd/disks/default.img    | 16      |
+------------+-------------+--------+-----------------------------------+---------+
| secondpool |             | zfs    | /var/lib/lxd/disks/secondpool.img | 0       |
+------------+-------------+--------+-----------------------------------+---------+
```

(`secondpool` is not used. I created it only to see whether I could expand `default` or migrate to a second pool instead.)

```
gpsemc@lxd15:~$ sudo ls -l /var/lib/lxd/disks/
[sudo] password for gpsemc:
/var/lib/lxd/disks/:
total 97521464
-rw------- 1 root root 207374182400 May 29 12:40 default.img
-rw------- 1 root root 200000000000 May 29 11:54 secondpool.img
```

The size of `default.img` does look like it has grown here.
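The new file size can be reconciled with a little arithmetic. This sketch assumes LXD's `100GB` means decimal gigabytes (`secondpool.img` being exactly 200,000,000,000 bytes points the same way), while `truncate`'s `G` suffix adds binary gibibytes. On that assumption the file should now be exactly 207,374,182,400 bytes, which is about 193 GiB rather than 200 GiB. The `total` line of the listing shows how much space is actually allocated, assuming GNU `ls`'s default 1 KiB block size.

```shell
#!/usr/bin/env bash
# Reconcile the default.img size from `ls -l` with the two steps taken.
# Assumption: LXD's "100GB" is decimal (1000^3), truncate's "G" is binary (1024^3).
created=$((100 * 1000**3))   # pool created as 100 GB = 100000000000 bytes
added=$((100 * 1024**3))     # truncate -s +100G adds 100 GiB = 107374182400 bytes
total=$((created + added))
echo "expected default.img size: $total bytes"   # 207374182400, as in ls -l

# `total 97521464` counts 1 KiB blocks actually allocated (GNU ls default),
# summed over both image files in the directory.
allocated_gib=$((97521464 / 1024 / 1024))
echo "allocated on disk: about ${allocated_gib} GiB"
```

So the file grew exactly as `truncate` was asked to; whether the ZFS pool inside it followed is a separate question.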

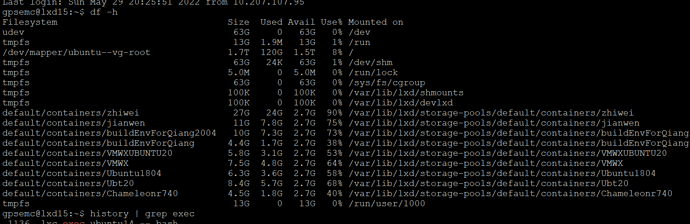

So `/var/lib/lxd/disks/default.img` seems to have grown to about 200 GB, but when I run `lxc storage show default` it still shows `size: 100GB`.

Any ideas?