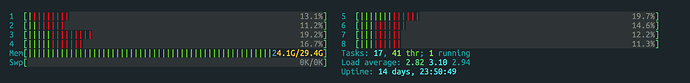

We noticed today that containers with resource limits (specifically, noting this for RAM and CPU) are still displaying access to the full system’s resources. Below is the htop output for a container that is limited to 2GB of RAM and 4 CPUs:

(note that it also show’s the system’s uptime)

Here is the applicable config for a container where we are seeing this:

    devices:
      eth0:
        limits.egress: 15Mbit
        limits.ingress: 25Mbit
        nictype: bridged
        parent: lxdbr0
        type: nic
      root:
        limits.read: 10MB
        limits.write: 10MB
        path: /
        pool: default
        size: 421MB
        type: disk
    ephemeral: true
    profiles:
    - default
    - small
    - small-hard

The small profile contains:

    $ lxc profile show small
    config:
      limits.cpu: 2-5
      limits.cpu.allowance: 5%
      limits.cpu.priority: "1"
      limits.disk.priority: "1"
      limits.memory: 256MB
      limits.memory.enforce: soft
      limits.network.priority: "1"
      limits.processes: "500"
    ...

The small-hard profile contains:

    $ lxc profile show small-hard
    config:
      limits.memory: 2048MB
      limits.memory.enforce: hard
    ...

Both profiles are applied to the container:

    $ lxc profile show small | grep production-68e39679e31cbf00-1563902440813
    - /1.0/containers/production-68e39679e31cbf00-1563902440813
    $ lxc profile show small-hard | grep production-68e39679e31cbf00-1563902440813
    - /1.0/containers/production-68e39679e31cbf00-1563902440813
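
For reference, the 4 CPUs figure comes from the `limits.cpu: 2-5` range in the small profile, which covers cores 2, 3, 4 and 5. Below is a minimal sketch of that arithmetic. The helper name `count_cpus` is our own for illustration and is not part of LXD.

```shell
#!/bin/sh
# Hypothetical helper (not an LXD command): count the CPUs named by a
# cpuset-style list such as "2-5" or "0,2-5".
count_cpus() {
    total=0
    old_ifs=$IFS
    IFS=,
    for part in $1; do
        case $part in
            # A range "a-b" contributes b - a + 1 CPUs.
            *-*) total=$((total + ${part#*-} - ${part%-*} + 1)) ;;
            # A single CPU number contributes 1.
            *)   total=$((total + 1)) ;;
        esac
    done
    IFS=$old_ifs
    echo "$total"
}

count_cpus "2-5"   # prints 4
```

Inside the container, `grep -c ^processor /proc/cpuinfo` would be the number to compare this against.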

We’ve tested filling the container’s memory to 99% of what it reports as available:

    stress --vm-bytes $(awk '/MemAvailable/{printf "%d\n", $2 * 0.99;}' < /proc/meminfo)k --vm-keep -m 1

The system kills this command once memory is exhausted. As far as we can tell, it only exhausts the container’s RAM, not the host’s, so the limits themselves are still being enforced.
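
To separate what the container displays from what is enforced, the two values can be compared from inside the container. This is a rough sketch, not output we have captured. The function names are ours, and the cgroup paths are assumptions: the first is the usual cgroup v1 location and the second the cgroup v2 one, and either may be mounted elsewhere on a given system.

```shell
#!/bin/sh
# Print MemTotal from a meminfo-style file in bytes (the file reports kB).
meminfo_total_bytes() {
    file=${1:-/proc/meminfo}
    [ -r "$file" ] || { echo 0; return 0; }
    kb=$(awk '/^MemTotal:/ { print $2 }' "$file")
    echo $(( ${kb:-0} * 1024 ))
}

# Print the memory limit the cgroup enforces, or "unknown" if neither
# assumed path is readable. On cgroup v2 the value may be the word "max".
cgroup_limit_bytes() {
    for f in /sys/fs/cgroup/memory/memory.limit_in_bytes \
             /sys/fs/cgroup/memory.max; do
        if [ -r "$f" ]; then
            cat "$f"
            return 0
        fi
    done
    echo "unknown"
}

echo "MemTotal shown in /proc/meminfo: $(meminfo_total_bytes) bytes"
echo "cgroup memory limit:             $(cgroup_limit_bytes)"
```

If the first value matches the host and the second is about 2GB, that would fit what we describe above: the limit is enforced, but the container is shown the host’s figures.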

We suspect this was introduced in 3.15: we only just noticed it, and we would likely have noticed it sooner had it happened in an earlier version.

We’re a compute reseller, so users being able to see the host’s resources is not ideal. Please let us know what other information we can provide.