Weekly status for the week of the 28th November to the 2nd December.

Introduction

This past week saw improvements to LXD’s DNS zones functionality, and several bug fixes, including a fix for a regression in the LXD 4.0 LTS snap VM functionality (see below).

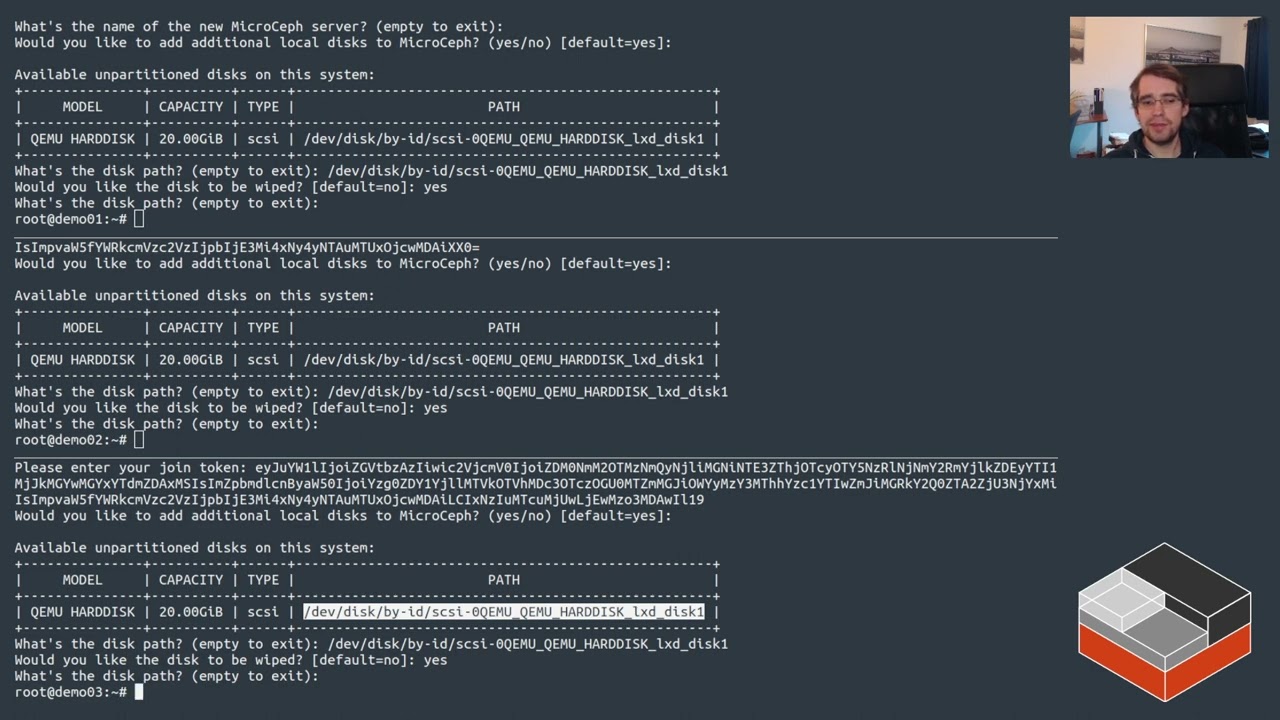

MicroCeph demo

A slightly different type of video from @stgraber this week. Instead of covering LXD, this week he is introducing the new MicroCeph package we have been working on. This is a snap-based packaging of Ceph combined with a clustering layer similar to LXD’s, which allows for very easy clustering and management across multiple systems. This complements LXD by providing a distributed storage layer it can use.

For more info see Introducing MicroCeph

LXD 4.0 LTS snap VM issue

A recent dependency update to QEMU 7.1.0 in the 4.0/* snap channels (in order to maintain security updates from upstream) prevented VM instances from being started. This has now been fixed in revision 4.0.9-a29c6f1 2022-12-04 (24061). The fix was to backport a change to the sandboxing of QEMU that was already present in the LXD 5.0 LTS and 5.x series.

Job openings

Canonical Ltd. is strengthening its investment in LXD and is looking to build multiple squads under the technical leadership of @stgraber.

As such, we are looking for highly technical first-line managers and individual contributors to grow the team and pursue our efforts around scalability and clustering.

All positions are 100% remote with some travel for internal events and conferences.

For more info please see LXD related openings at Canonical Ltd (2022-2023)

LXD

Improvements:

- Added support for per-project DNS zones, even when sharing a network from the default project. This enables the creation of DNS zones that give a project-specific “view” of addresses on a shared network. This required the introduction of the features.networks.zones project setting which, when enabled, causes DNS zones to be associated with the current project rather than with the project that new networks would be associated with (which was the old behaviour). Additionally, networks can now be associated with multiple DNS zones (at most one per project), as the dns.zone.forward setting now accepts a comma-separated list of zone names. See Linux Containers - LXD - Has been moved to Canonical for more information.

- Related to adding support for DNS project zones above, the bridge and ovn network types now include their own gateway addresses in the lxc network list-leases <network> command (but only when viewing them within the context of the network’s own project). Similarly, the bridge network type now only includes downstream OVN uplink router addresses in its leases list when viewing them within the context of the bridge network’s own project. This keeps the dynamic DNS zone records and the list-leases output consistent with each other.

- Reviewed, reworked and re-ordered the instance device documentation.

- Two minor snap related improvements to the documentation.

- Updated the qemu-img AppArmor profile to prevent some denials that were causing unnecessary log messages.
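The project-scoping rules in the first two improvements can be sketched in Go as follows. This is a minimal illustration under stated assumptions, not LXD's implementation: the Project and Lease types and the zoneProject, validateForwardZones and visibleLeases functions are hypothetical. It assumes that, with features.networks.zones disabled, a zone falls back to the project that new networks would use (the project itself when features.networks is enabled, otherwise default), and that instance leases have already been filtered by project elsewhere.

```go
package main

import (
	"fmt"
	"strings"
)

// Project is a hypothetical, trimmed-down view of an LXD project's config.
type Project struct {
	Name   string
	Config map[string]string
}

// zoneProject returns the project a DNS zone created from p is associated with.
// Assumption: mirrors the behaviour described in the status post, not LXD code.
func zoneProject(p Project) string {
	// New behaviour: zones belong to the current project.
	if p.Config["features.networks.zones"] == "true" {
		return p.Name
	}
	// Old behaviour: zones follow the project new networks would use.
	if p.Config["features.networks"] == "true" {
		return p.Name
	}
	return "default"
}

// validateForwardZones parses a comma-separated dns.zone.forward value and
// rejects more than one zone per project. zoneOwners maps zone name -> project.
func validateForwardZones(value string, zoneOwners map[string]string) ([]string, error) {
	seen := map[string]string{} // project -> zone already claimed for it
	var zones []string
	for _, raw := range strings.Split(value, ",") {
		zone := strings.TrimSpace(raw)
		if zone == "" {
			continue
		}
		project, ok := zoneOwners[zone]
		if !ok {
			return nil, fmt.Errorf("unknown zone %q", zone)
		}
		if prev, dup := seen[project]; dup {
			return nil, fmt.Errorf("zones %q and %q both belong to project %q", prev, zone, project)
		}
		seen[project] = zone
		zones = append(zones, zone)
	}
	return zones, nil
}

// Lease is a hypothetical lease entry; Kind is "dynamic", "gateway" or "uplink".
type Lease struct {
	Address string
	Kind    string
}

// visibleLeases hides gateway and OVN uplink addresses unless the leases are
// viewed from the network's own project.
func visibleLeases(all []Lease, networkProject, viewProject string) []Lease {
	var out []Lease
	for _, l := range all {
		if (l.Kind == "gateway" || l.Kind == "uplink") && viewProject != networkProject {
			continue
		}
		out = append(out, l)
	}
	return out
}

func main() {
	blue := Project{Name: "blue", Config: map[string]string{"features.networks.zones": "true"}}
	green := Project{Name: "green", Config: map[string]string{}}
	fmt.Println(zoneProject(blue), zoneProject(green)) // blue default

	owners := map[string]string{"lxd.example.net": "default", "blue.example.net": "blue"}
	zones, err := validateForwardZones("lxd.example.net,blue.example.net", owners)
	fmt.Println(zones, err) // [lxd.example.net blue.example.net] <nil>

	leases := []Lease{{"10.0.0.1", "gateway"}, {"10.0.0.42", "dynamic"}}
	fmt.Println(len(visibleLeases(leases, "default", "blue"))) // 1
}
```

Keeping the "one zone per project" check in the parser means an invalid dns.zone.forward value is rejected before any DNS records are generated.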

Bug fixes:

- Fixed a bug where restoring a custom volume snapshot inside a restricted project could fail because LXD incorrectly checked the snapshot’s size against the project’s restrictions. As this check is unnecessary, and is not performed when instance snapshots are restored, size restriction checks are now skipped when restoring custom volume snapshots.

- Fixed a regression introduced by the recent move away from the fsnotify package to the inotify package. When using clustering with the bridge network in fan mode, LXD uses the forkdns process to provide cluster-level DNS. It watches for cluster member address changes written to a local config file and reloads its config automatically. After the move to the inotify package we were incorrectly watching for “all events” on this file, so the act of reading its contents also triggered an event. This led to an event loop that continually reloaded the forkdns process, causing high CPU usage and many log messages. This has now been fixed by only watching for when the config file is changed.
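The forkdns feedback loop can be illustrated with a small Go simulation. It is a sketch, not the forkdns code: reloadCount is hypothetical, the event-bit constants are copied from the Linux <sys/inotify.h> values rather than imported, and it assumes the servers file is updated by renaming a new file into place (which inotify reports as IN_MOVED_TO on the watched directory). Each reload reads the file, which the kernel reports as open, access and close-nowrite events; with an IN_ALL_EVENTS mask those events trigger further reloads, while an IN_MOVED_TO mask ignores them.

```go
package main

import "fmt"

// Inotify event bits; values match Linux <sys/inotify.h>.
const (
	inAccess       uint32 = 0x001
	inCloseNoWrite uint32 = 0x010
	inOpen         uint32 = 0x020
	inMovedTo      uint32 = 0x080
	inAllEvents    uint32 = 0xfff
)

// reloadCount simulates a watcher that reloads (and therefore re-reads the
// file) on every event matching watchMask. maxSteps bounds the simulation.
func reloadCount(watchMask uint32, initial []uint32, maxSteps int) int {
	queue := append([]uint32(nil), initial...)
	reloads := 0
	for steps := 0; len(queue) > 0 && steps < maxSteps; steps++ {
		ev := queue[0]
		queue = queue[1:]
		if ev&watchMask == 0 {
			continue // not in the watch mask: the kernel would not report it
		}
		reloads++
		// Reloading reads the file, which generates these events in turn.
		queue = append(queue, inOpen, inAccess, inCloseNoWrite)
	}
	return reloads
}

func main() {
	update := []uint32{inMovedTo} // one config update, written via rename
	fmt.Println("IN_ALL_EVENTS reloads:", reloadCount(inAllEvents, update, 100)) // 100 (hits the cap)
	fmt.Println("IN_MOVED_TO reloads:", reloadCount(inMovedTo, update, 100))     // 1
}
```

The maxSteps cap only exists so the simulation terminates; the real loop had no such bound, which is why it showed up as sustained CPU usage.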

LXC

Improvements:

- Turned the unprivileged container rootfs into a shared mount in a separate peer group, because most workloads rely on the rootfs being a shared mount. For example, systemd daemons like systemd-udevd run in their own mount namespace, which is made a dependent mount (MS_SLAVE) with the host rootfs as its dominating mount. This means new mounts on the host propagate into the respective services.
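The propagation behaviour described above can be modelled with a toy Go example. It is a simplification of the kernel's shared-subtree rules (it ignores transitive propagation through mounts that are both slave and shared), and the Mount type, the receivers function and the peer-group numbers are hypothetical; it only illustrates the semantics, not how LXC implements them. Here "host-root" is the real host, "container-root" is the container's rootfs in its own peer group, and "udevd-root" is a service namespace that is a slave of the container rootfs.

```go
package main

import "fmt"

// Mount is a toy model of a mount's propagation state.
// PeerGroup != 0: the mount is shared and belongs to that peer group.
// Master != 0: the mount is a slave receiving events from that peer group.
type Mount struct {
	Name      string
	PeerGroup int
	Master    int
}

// receivers lists the mounts that see a new mount created under src.
func receivers(src Mount, all []Mount) []string {
	var out []string
	if src.PeerGroup == 0 {
		return out // private (or slave-only) mounts do not propagate outward
	}
	for _, m := range all {
		if m.Name == src.Name {
			continue
		}
		// Peers receive the event, and so do slaves whose master is this group.
		if m.PeerGroup == src.PeerGroup || m.Master == src.PeerGroup {
			out = append(out, m.Name)
		}
	}
	return out
}

func main() {
	host := Mount{Name: "host-root", PeerGroup: 1}
	ctRoot := Mount{Name: "container-root", PeerGroup: 2} // separate peer group
	udevd := Mount{Name: "udevd-root", Master: 2}         // MS_SLAVE of the rootfs
	all := []Mount{host, ctRoot, udevd}

	fmt.Println(receivers(ctRoot, all)) // [udevd-root]
	fmt.Println(receivers(host, all))   // [] : host mounts stay out of the container
}
```

Using a separate peer group is what keeps the container's mounts flowing down into its services without leaking back into, or receiving from, the real host.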

LXCFS

Bug fixes:

- Fixed /proc/cpuinfo so it honours the CPU personality inside the container.

YouTube videos

The LXD team is running a YouTube channel with live streams covering LXD releases and weekly videos on different aspects of LXD. You may want to give it a watch and/or subscribe for more content in the coming weeks.

Contribute to LXD

Ever wanted to contribute to LXD but not sure where to start?

We’ve recently put some effort into properly tagging issues on GitHub that are suitable for new contributors: Easy issues for new contributors

Upcoming events

- FOSDEM 2023 4th-5th February 2023. See FOSDEM 2023 containers devroom: Call for papers

Ongoing projects

The list below covers feature or refactoring work which will span several weeks/months and can’t be tied directly to a single GitHub issue or pull request.

- Stable release work for LXC, LXCFS and LXD

Upstream changes

The items listed below are highlights of the work which happened upstream over the past week and which will be included in the next release.

LXD

- doc/instances: clean up devices overview section

- Networks: Support multiple zones per network and zones associated to their own projects

- Test tweaks

- ceph: Drop unnecessary volume.block.* config keys

- More shellcheck fixes and other tweaks

- Storage: Don’t check project limits when doing a volume snapshot restore

- Network: Exclude gateway and uplink addresses in leases list when filtering by project other than network’s

- doc: add link to Running in production YouTube video

- docs: Added snap version directory for local remotes

- Networks: Use project.NetworkZoneProject in networkZonesGet

- lxd/apparmor: fix AppArmor profile for qemu-img

- lxd-generate: Generate boilerplate functions

- forkdns: Updates serversFileMonitor to only watch for inotify.InMovedTo event

- doc: Adds --cohort="+" to snap refresh command

LXC

LXCFS

Distrobuilder

- Nothing to report this week

LXD Charm

- Nothing to report this week

Distribution work

This section is used to track the work done in downstream Linux distributions to ship the latest LXC, LXD and LXCFS as well as work to get various software to work properly inside containers.

Ubuntu

- Nothing to report this week

Snap

- Nothing to report this week